GitHub Actions Self‑Hosted Runner: Pricing & Upgrade

Two things just shifted under your feet. First, GitHub introduced a $0.002/minute cloud platform charge that applies to private‑repo workflows running on your GitHub Actions self-hosted runner, effective March 1, 2026. Second, on March 16, 2026, GitHub will enforce a minimum runner version—v2.329.0—at configuration/registration time. If you provision runners from golden images, bake AMIs, or roll your own ARC images, this is the moment to tighten your pipeline and cost model.

What changed in March 2026 (and what you can’t ignore)

Let’s anchor the dates. As of March 1, 2026, self‑hosted usage on private repositories incurs a $0.002/min charge as part of GitHub’s platform fee. Hosted‑runner list prices were lowered on January 1, 2026, and already include that platform charge. Public repos remain free. GitHub Enterprise Server isn’t affected by these pricing changes. Separately, on March 16, 2026, GitHub blocks registration of self‑hosted runners older than v2.329.0. This enforcement happens when you run the config script; existing, already‑registered runners still need to follow update policies, but the immediate gate is at registration.

Why does this matter? Because fleets break at their seams—golden images, base Docker layers, and forgotten bootstrap scripts. If any step installs an older runner before config, registration fails. That’s a service outage you can schedule—or stumble into.
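
A cheap guard against that failure is to have the bootstrap compare the runner version it is about to install against the v2.329.0 floor before it ever calls the config script. The Python sketch below assumes the version string comes from wherever you pin it (an IaC variable, a Packer template). The function names are illustrative, not part of any GitHub tooling. It compares numeric tuples rather than strings, because a plain string comparison would rank "2.99.0" above "2.329.0".

```python
import re

# Registration floor described above: v2.329.0.
MINIMUM_RUNNER_VERSION = (2, 329, 0)


def parse_runner_version(text: str) -> tuple:
    """Parse '2.329.0' or 'v2.329.0' into a (major, minor, patch) tuple of ints."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", text.strip())
    if match is None:
        raise ValueError(f"unrecognized runner version: {text!r}")
    return tuple(int(part) for part in match.groups())


def meets_registration_floor(version: str) -> bool:
    """True when the version is at or above the minimum; tuples compare numerically."""
    return parse_runner_version(version) >= MINIMUM_RUNNER_VERSION


# Example: stop the bootstrap before config runs if the pinned version is too old.
pinned = "2.329.0"  # hypothetical: read this from your IaC/Packer variable instead
if not meets_registration_floor(pinned):
    raise SystemExit(f"runner {pinned} is below v2.329.0; refusing to configure")
```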

Remaining brownout windows you can still use

GitHub scheduled brownouts to surface breakage in safe bursts. As of today (March 5, 2026), the remaining blocks for sub‑v2.329.0 registration are scheduled for March 6 (20:00–00:00 UTC), March 9 (18:00–22:00 UTC), and March 13 (12:00–16:00 UTC). Use these windows to run controlled canary provisioning and verify that every path—manual, Terraform, auto‑scalers—pulls v2.329.0+ before configuration.
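
If you drive canaries from a scheduler, a small helper can confirm that a run actually lands inside a window. This is a sketch: the windows are copied from the schedule above, and the March 6 block is modeled as a four-hour span so it correctly crosses midnight into March 7. The helper names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Remaining brownout windows (UTC), copied from the schedule above.
# Each lasts four hours; the March 6 window runs past midnight into March 7.
BROWNOUT_WINDOWS = [
    (datetime(2026, 3, 6, 20, 0, tzinfo=timezone.utc), timedelta(hours=4)),
    (datetime(2026, 3, 9, 18, 0, tzinfo=timezone.utc), timedelta(hours=4)),
    (datetime(2026, 3, 13, 12, 0, tzinfo=timezone.utc), timedelta(hours=4)),
]


def in_brownout(moment: datetime) -> bool:
    """True if the timezone-aware `moment` falls inside a window (end exclusive)."""
    return any(start <= moment < start + length for start, length in BROWNOUT_WINDOWS)


def next_brownout(moment: datetime) -> Optional[datetime]:
    """Start of the next window that begins after `moment`, or None if none remain."""
    upcoming = [start for start, _ in BROWNOUT_WINDOWS if start > moment]
    return min(upcoming, default=None)
```

A canary job can call `in_brownout(datetime.now(timezone.utc))` and skip, or reschedule itself for `next_brownout(...)`, when it returns False.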

Why the GitHub Actions self-hosted runner change hits your budget

The new pricing nudges teams to do real math. If your org ran 600,000 minutes/month on self‑hosted for private repos, that’s $1,200 in platform charges. Add your cloud bills and engineering time, and suddenly GitHub‑hosted runners—now cheaper than 2025 rates—may be competitive for bursty or specialized workloads. Conversely, steady high‑throughput shops with warm caches and co‑located artifact stores can still win with self‑hosted. The trick is segmenting workloads by cost‑to‑completion, not ideology.
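
To make that segmentation concrete, compare monthly cost per workload rather than per fleet. The sketch below assumes you can estimate your own compute cost per self-hosted minute and a fixed monthly ops overhead; those inputs, and the hosted per-minute rate you pass in, are placeholders for your own numbers, not GitHub prices. `Decimal` keeps the money math exact.

```python
from decimal import Decimal

# Self-hosted platform charge for private-repo workflows (from the pricing change above).
PLATFORM_FEE_PER_MIN = Decimal("0.002")


def platform_fee(minutes: int) -> Decimal:
    """GitHub platform charge alone for private-repo self-hosted minutes."""
    return minutes * PLATFORM_FEE_PER_MIN


def self_hosted_monthly_cost(minutes: int, infra_per_min: Decimal, fixed_ops: Decimal) -> Decimal:
    """Platform fee + your own compute cost per minute + fixed engineering/ops overhead."""
    return platform_fee(minutes) + minutes * infra_per_min + fixed_ops


def hosted_monthly_cost(minutes: int, hosted_per_min: Decimal) -> Decimal:
    """Hosted list prices already include the platform charge, so no separate fee here."""
    return minutes * hosted_per_min
```

At 600,000 private-repo minutes, `platform_fee` returns $1,200, matching the figure above. Pass each platform its own measured minutes: the same job may run longer on a cold hosted runner than on a warm self-hosted one, and that difference is exactly what cost-to-completion captures.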

An example split that consistently pays off: keep long‑running, IO‑heavy builds on self‑hosted runners with local caches and package mirrors; push short‑lived, embarrassingly parallel test shards and one‑off PR checks to the now‑cheaper hosted runners, where queue depth and cold‑start variance matter more than pennies per minute.
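
One way to encode that split is a routing heuristic that your workflow generator or runner-group assignment can call. This is an illustrative sketch: the `JobProfile` fields and the 20-minute cutoff are assumptions to tune against your own telemetry, not thresholds from GitHub.

```python
from dataclasses import dataclass


@dataclass
class JobProfile:
    name: str
    typical_minutes: float   # median wall-clock time on self-hosted today
    io_heavy: bool           # big dependency pulls, large local caches, artifact churn
    parallel_shards: int     # independent pieces the job fans out into


def choose_pool(job: JobProfile, long_job_minutes: float = 20.0) -> str:
    """Heuristic routing: long IO-heavy work stays self-hosted; short or sharded work goes hosted."""
    if job.io_heavy and job.typical_minutes >= long_job_minutes:
        return "self-hosted"      # warm caches and nearby mirrors pay off here
    if job.parallel_shards > 1 or job.typical_minutes < long_job_minutes:
        return "github-hosted"    # queue depth and cold-start variance dominate
    return "self-hosted"          # default until hosted trials prove cheaper
```

The fallback keeps long, single, non-IO-bound jobs on self-hosted until measurements say otherwise; flip it if your hosted trials win on cost-to-completion.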

A pragmatic upgrade checklist (30–90 minutes)

Here’s a fast, safe path to get every new runner over the v2.329.0 line without bricking your fleet during peak hours.

- Locate all install points. Search your IaC, Packer templates, Dockerfiles, and bash/PowerShell bootstrap scripts for where you download and install the runner. Centralize version control in one place.

- Pin and verify. Pin an exact runner release (v2.329.0 or later) in your install logic and verify the downloaded archive against its published SHA‑256 checksum. Don’t rely on “latest.”

- Order matters. Ensure the runner binary is updated before you invoke the config/registration step. For immutable images that pre‑bake the runner, rebuild the image and roll forward.

- Rotate secrets. Registration failures often mask token scope issues. Confirm each registration token is issued at the scope the runner targets (repository, organization, or enterprise), and shorten credential TTLs to reduce blast radius.

- Canary with brownouts. During the remaining brownout windows, intentionally run provisioning for each environment (Linux containers, Windows VMs, macOS hosts) and validate that registration completes. Capture logs and exit codes.

- Label hygiene. List labels from live runners and ensure they match targeting in your YAML. Upgrades or image changes sometimes drop custom labels—forcing jobs to the wrong arch or pool.

- Auto‑update policy. After registration, keep runners current: GitHub stops queuing jobs to a self‑hosted runner that goes 30 days without applying an available update. If you disable auto‑updates, put a weekly job in place to roll instances and re‑bake images.

- ARC or Scale Set Client users. Update your base images and Helm values (ARC) or provisioning hooks (Scale Set Client) to ensure v2.329.0+ is present at container/VM creation time.
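
The "pin and verify" and "order matters" steps can be sketched as a pre-config gate. It assumes the release-asset naming used on the actions/runner releases page (`actions-runner-<os>-<arch>-<version>`, `.zip` on Windows and `.tar.gz` elsewhere) and that you take the expected SHA-256 from a source you trust, such as the checksums published with each release. The helper names are hypothetical.

```python
import hashlib
from pathlib import Path

# Pin one exact release in one place; bump it deliberately, never "latest".
RUNNER_VERSION = "2.329.0"


def runner_asset_url(version: str, os_name: str, arch: str) -> str:
    """Release-asset URL, assuming the actions-runner-<os>-<arch>-<version> naming pattern.

    os_name is e.g. "linux", "osx", or "win"; Windows assets are .zip, others .tar.gz.
    """
    ext = "zip" if os_name == "win" else "tar.gz"
    return (
        "https://github.com/actions/runner/releases/download/"
        f"v{version}/actions-runner-{os_name}-{arch}-{version}.{ext}"
    )


def sha256_of(path: Path) -> str:
    """Hash the archive in 1 MiB chunks so large files never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_before_config(archive: Path, expected_sha256: str) -> None:
    """Raise on mismatch so the bootstrap stops before the config/registration step."""
    actual = sha256_of(archive)
    if actual != expected_sha256.strip().lower():
        raise RuntimeError(f"checksum mismatch for {archive.name}: got {actual}")
```

Call `verify_before_config` after the download and before `config.sh` (or `config.cmd` on Windows). Because it raises, a bad archive stops the bootstrap instead of registering a runner you can't vouch for.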

If you want a deeper, date‑by‑date runbook, see our field guide, a March 2026 runner plan with war‑room checklists.

Quick answers to hot questions

Will my existing registered runners stop working on March 16?

The enforcement gate is at configuration/registration time. Don’t get complacent, though: runners that fall too far behind the current release can stop receiving jobs. Keep them current and plan periodic rebuilds.

Does this affect GitHub Enterprise Server (on‑prem)?

No. The self‑hosted platform fee doesn’t apply to GHES, and the registration‑time minimum in March targets GitHub.com. Still, track runner freshness on your own cadence.

Are public repositories still free?

Yes. Public‑repo workflows are exempt from the new platform charge for both hosted and self‑hosted runners. For open source, you’re safe—but keep runners patched.

What about Windows and macOS images?

GitHub added new Windows and macOS images in February, including options to validate newer toolchains ahead of broader migrations. If you rely on platform‑specific SDKs, schedule compatibility runs now.

Cost‑control playbook: cut minutes, not momentum

You can shave 20–40% off minutes without slowing developers if you target the right levers. Here’s where I usually start.

- Concurrency caps with intent. Set per‑repo and per‑queue concurrency to avoid stampedes from flaky reruns. Then allowlist hot paths (release branches, tag builds) so they can burst when it truly matters.

- Smarter matrices. Collapse redundant OS/arch combinations for libraries that don’t need them on every PR. Run the full matrix nightly on main.

- Selective test shards. Fail fast: run smoke tests and static checks on every PR; gate heavy integration suites behind changed‑path filters.

- Cache like you mean it. Prime dependency caches on warm runners. For package‑heavy jobs, host mirrors in the same AZ/VPC to turn network time into disk time.

- Artifact diets. Upload fewer, smaller artifacts; switch to non‑zipped artifacts when supported to avoid double compression and wasted CPU.

- Bring in hosted runners where they win. Short‑lived PR checks benefit from GitHub‑hosted’s queue depth and image freshness—especially now that list prices are down.

- Right‑size your images. Keep language runtimes and SDKs layered and shared. For containers, split “base build” and “job tools” layers to maximize cache hits.
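
The matrix and changed-path levers combine naturally in one planning step. A sketch, assuming a setup job that writes this plan as JSON (for example `json.dumps(plan(...))` into `$GITHUB_OUTPUT`) for later jobs to consume through `fromJSON` in `strategy.matrix`; the suite names and path patterns are hypothetical.

```python
from fnmatch import fnmatch

# Full OS/arch matrix for nightly runs on main; PRs get a single representative leg.
FULL_MATRIX = [
    {"os": os_name, "arch": arch}
    for os_name in ("linux", "windows", "macos")
    for arch in ("x64", "arm64")
]
PR_MATRIX = [{"os": "linux", "arch": "x64"}]

# Hypothetical mapping from heavy integration suites to the paths that should trigger them.
HEAVY_SUITES = {
    "integration-db": ["services/db/*", "migrations/*"],
    "integration-api": ["services/api/*", "proto/*"],
}


def plan(event: str, branch: str, changed_paths: list) -> dict:
    """Return the matrix and test suites for one workflow run."""
    nightly_main = event == "schedule" and branch == "main"
    matrix = FULL_MATRIX if nightly_main else PR_MATRIX
    suites = ["smoke", "static-checks"]  # always run: cheap and fail fast
    for suite, patterns in HEAVY_SUITES.items():
        touched = any(fnmatch(path, pattern) for path in changed_paths for pattern in patterns)
        if nightly_main or touched:
            suites.append(suite)
    return {"matrix": matrix, "suites": suites}
```

Note that `fnmatch`'s `*` also matches across `/`, so `services/db/*` covers nested files. That differs from GitHub's `paths` filters, where `*` stops at `/` and `**` is needed for nesting.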

Need a refresher on modern runtime timelines while you’re at it? Our Node.js EOL 2026 playbook helps teams avoid silent breakage from aging Actions and toolchains.

Architecture in 2026: ARC, Scale Set Client, or DIY?

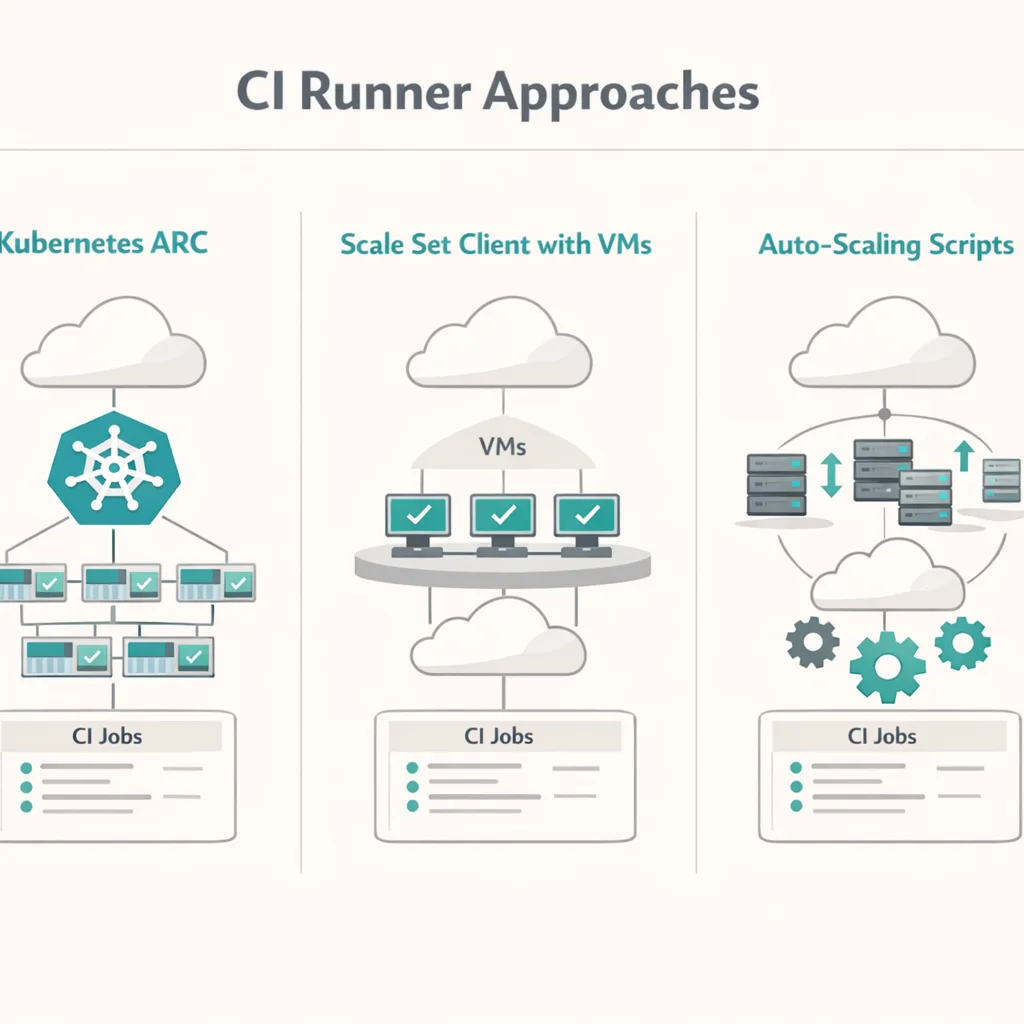

There are three viable models for serious fleets:

- Actions Runner Controller (ARC). The Kubernetes standard. Great for teams already operating K8s, with mature autoscaling and isolation. You’ll manage Helm, images, and metrics—but you inherit a proven pattern.

- Runner Scale Set Client. New Go‑based client that hits the same scale set APIs as ARC, without Kubernetes. Ideal if you prefer VM auto‑scalers, cloud functions launching ephemeral runners, or bare‑metal labs. You own the provisioning logic but reduce moving parts.

- DIY scripts and queues. Bash/PowerShell plus cloud APIs can work for small teams or edge cases, but the maintenance tax grows fast. If you’re here, at least add health checks, backoff, and a queue.
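
If you do stay DIY, the backoff piece is small enough to get right once. This sketch retries any provisioning or registration attempt (the `action` callable is a hypothetical stand-in for your own code) with capped exponential backoff and full jitter, so a batch of instances that failed together doesn't retry in lockstep. The injectable `sleep` lets you test it without waiting.

```python
import random
import time
from typing import Callable


def retry_with_backoff(
    action: Callable[[], bool],
    attempts: int = 5,
    base_delay: float = 2.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call `action` until it returns True, sleeping between failures."""
    for attempt in range(attempts):
        if action():
            return True
        if attempt < attempts - 1:
            # Exponential growth, capped; full jitter picks anywhere in [0, cap].
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))
    return False
```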

My rule: if you’re over ~200 concurrent jobs at peak, adopt ARC or the Scale Set Client. Below that, pick the simplest path that keeps observability, isolation, and cost controls tight.
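
To know which side of that line you're on, measure peak concurrency instead of guessing. A minimal sweep-line sketch, assuming you've exported each job's start and completion timestamps (GitHub's workflow job objects carry `started_at` and `completed_at`):

```python
from datetime import datetime


def peak_concurrency(jobs: list) -> int:
    """Max number of jobs running at once, given (started_at, completed_at) pairs."""
    events = []
    for started, completed in jobs:
        events.append((started, 1))
        events.append((completed, -1))
    # Tuples sort by time, then delta: at equal timestamps -1 comes before +1,
    # so a job that starts exactly when another ends does not count as overlap.
    events.sort()
    running = peak = 0
    for _, delta in events:
        running += delta
        peak = max(peak, running)
    return peak
```

Run it over a busy week rather than a single day; the number that matters is the peak you need to serve without queueing.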

Reliability traps I keep seeing (and how to avoid them)

Upgrades surface latent debt. These are the potholes that crater pipelines at exactly the wrong time.

- Image drift. Golden images that pre‑install the runner go stale. Automate nightly rebuilds and sign images. Bake the runner late in your image to speed rebuilds.

- Label skew. After upgrades, missing or renamed labels cause jobs to run on the wrong arch (hello, Apple silicon vs Intel). Assert labels in a startup script and fail fast.

- Action pinning. Pin actions by SHA, not just tags. Immutable actions and explicit allowlists reduce supply‑chain risk and flakiness from surprise updates.

- Data egress surprises. Self‑hosted runners in locked‑down networks need explicit egress to GitHub endpoints, artifact stores, and package registries. If you’re building agentic or AI workflows, lock down outbound calls. Our guide on egress firewalls for AI agents shows a sensible pattern.

- Metrics blind spots. Without per‑job cost and wait time, you’ll optimize the wrong thing. Pipe job events to your SIEM or data lake; alert on queue time and cache miss spikes.
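
For the action-pinning trap, a quick audit can flag any `uses:` reference that isn't a full commit SHA. This is a regex sketch over workflow text, not a YAML parser, so treat it as a lint rather than a guarantee; local `./` actions and `docker://` references are skipped.

```python
import re

# Matches `uses: owner/repo@ref` (optionally quoted, optionally a list item) and captures both parts.
USES_LINE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s@]+)@([^'\"\s#]+)")
FULL_SHA = re.compile(r"[0-9a-f]{40}")


def unpinned_actions(workflow_text: str) -> list:
    """Return `action@ref` strings whose ref is not a full 40-character commit SHA."""
    findings = []
    for line in workflow_text.splitlines():
        match = USES_LINE.match(line)
        if match is None:
            continue
        action, ref = match.groups()
        if action.startswith(("./", "docker://")):
            continue
        if not FULL_SHA.fullmatch(ref):
            findings.append(f"{action}@{ref}")
    return findings
```

Running it in CI against `.github/workflows/*.yml` and failing on a non-empty result turns the pinning rule into something that can't silently regress.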

The 5×5 upgrade drill (five teams, five days)

Here’s a structure we use with clients to land upgrades quickly without heroics.

- Day 1 — Discovery. Inventory all runner pools, labels, images, and install paths. Flag any pre‑baked runner versions below v2.329.0.

- Day 2 — Pin and bake. Update scripts and images to pull v2.329.0+ before config. Rebuild Linux, Windows, and macOS images. Add checksums and signature verification.

- Day 3 — Brownout canaries. During a brownout window, exercise each provisioning path. Capture failures and fix in place.

- Day 4 — Cost cuts. Apply matrices, caching, and artifact changes to shave minutes. Trial hosted runners for a hot PR path and compare real cost‑to‑completion.

- Day 5 — Observability. Ship job telemetry. Set concurrency and rerun policies. Document roll‑forward and rollback.
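
For the Day 5 telemetry, queue time tells you whether concurrency caps or pool sizes are wrong. A sketch, assuming your job events carry a queued timestamp and a start timestamp; the p95 rule and the threshold are placeholders to tune.

```python
from datetime import datetime
from statistics import quantiles


def queue_seconds(queued_at: datetime, started_at: datetime) -> float:
    """Time a job waited for a runner."""
    return (started_at - queued_at).total_seconds()


def p95(values: list) -> float:
    """95th percentile: the last of 19 cut points that split the data into 20 groups."""
    return quantiles(values, n=20, method="inclusive")[-1]


def should_alert(waits: list, threshold_seconds: float) -> bool:
    """Alert when p95 queue time exceeds the threshold (needs at least two samples)."""
    return len(waits) >= 2 and p95(waits) > threshold_seconds
```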

People also ask

How do I forecast the new cost for my team?

Pull last month’s Actions usage report, isolate private‑repo self‑hosted minutes, multiply by $0.002, then model 20–40% reductions from matrix and caching tweaks. Compare that to discounted hosted‑runner rates for the same workloads. Revisit monthly.
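
The forecast itself is a few lines. This sketch applies the $0.002/min charge to your private-repo self-hosted minutes and models the 20–40% reduction range; the reduction levels are scenarios to compare, not predictions.

```python
from decimal import Decimal

PLATFORM_FEE_PER_MIN = Decimal("0.002")  # private-repo self-hosted charge


def forecast_platform_fee(minutes: int, reductions=("0", "0.20", "0.40")) -> dict:
    """Monthly platform fee at current volume and after each modeled cut in minutes."""
    forecast = {}
    for reduction in map(Decimal, reductions):
        remaining = minutes * (1 - reduction)
        forecast[f"{int(reduction * 100)}% fewer minutes"] = remaining * PLATFORM_FEE_PER_MIN
    return forecast
```

For 600,000 minutes this yields $1,200, $960, and $720 per month for the three scenarios.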

Should I move everything to hosted runners now that prices dropped?

No blanket moves. Hosted shines for spiky PR checks and short tests. Self‑hosted wins for long jobs, heavy caches, and when data gravity favors proximity to your internal registries and artifact stores.

What labels should I standardize on?

Keep labels semantic and minimal: os, arch, toolchain tier (e.g., xcode‑26, vs‑2026), and performance class (small/standard/large). Enforce them in startup scripts to avoid accidental drift.
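
A startup check along these lines can enforce that scheme before the runner registers. The label values are hypothetical examples of the os, arch, and performance-class dimensions above; feed it the labels your bootstrap is about to pass to `config.sh --labels`.

```python
# Hypothetical allowed values for three label dimensions; a toolchain tier
# such as "xcode-26" can ride along as an extra label.
LABEL_DIMENSIONS = {
    "os": {"linux", "windows", "macos"},
    "arch": {"x64", "arm64"},
    "size": {"small", "standard", "large"},
}


def label_problems(labels: set, expected: set) -> list:
    """List every way `labels` deviates from what this pool should advertise."""
    problems = [f"missing label: {label}" for label in sorted(expected - labels)]
    for dimension, allowed in LABEL_DIMENSIONS.items():
        present = labels & allowed
        if len(present) != 1:
            problems.append(f"expected exactly one {dimension} label, found {sorted(present)}")
    return problems


def fail_fast(labels: set, expected: set) -> None:
    """Exit non-zero before registration if the label set has drifted."""
    problems = label_problems(labels, expected)
    if problems:
        raise SystemExit("label check failed: " + "; ".join(problems))
```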

What to do next (this week)

- Before March 9: rebuild images and update all provisioning to v2.329.0+; run a canary during a brownout.

- By March 13: lock label standards, turn on SHA pinning, and write a 1‑page rollback plan.

- By March 16: verify every path (ARC, Scale Set Client, bespoke scripts) registers cleanly.

- This month: benchmark one hot PR job on hosted runners and keep whichever option wins on cost‑to‑completion.

If you want hands‑on help, our team does this work every week. Explore CI modernization services, check engagement pricing, browse our recent delivery stories, and reach out via one quick form. You’ll ship faster—and sleep better—before the March 16 cutoff.

Here’s the thing: the combination of a small per‑minute platform fee and a hard registration floor forces discipline. Embrace it. Get your GitHub Actions self-hosted runner estate reproducible, upgradeable, and observable. Then let data—not assumptions—decide where hosted runners fit. Do that, and March 2026 becomes just another calm, boring deploy week.
