GitHub Actions Pricing 2026: What Teams Should Do Now

Let’s get straight to it: GitHub Actions pricing 2026 isn’t a single switch you flip—it’s a set of overlapping changes that started on January 1, 2026, continue on March 16, 2026, and may add another cost lever later this year. If you lead delivery or platform engineering, the decisions you make this month will affect both your cloud bill and your ship cadence for the rest of 2026.

What actually changed in 2026?

Three items matter for most teams right now:

First, hosted runner prices decreased on January 1, 2026—GitHub’s own messaging cites up to a 39% reduction depending on machine type. That shift alone makes some self‑hosted fleets look less attractive on a pure cost basis, especially for general Linux and Windows workloads that don’t need exotic hardware.

Second, GitHub announced a $0.002‑per‑minute “Actions cloud platform” charge for self‑hosted usage, originally targeted for March 1, 2026. After community feedback, GitHub said they’re postponing that self‑hosted portion to re‑evaluate the approach. As of March 12, 2026, there isn’t a new enforcement date published—so treat it as “TBD but plausible.” Plan for it; don’t assume it will never land.

Third, there’s an operational deadline you can’t ignore: starting March 16, 2026, GitHub will block configuration of self‑hosted runners older than v2.329.0. If you redeploy runners from old images, they’ll fail to register. There were brownouts through late February and early March to help teams spot outdated instances; full enforcement begins on March 16.

There are two related timeline nudges you should also bake into your plan. Runners are shifting their embedded Node default to Node 24 in early March, and Node.js 20 reaches end of life on April 30, 2026. If your custom Actions or pinned third‑party Actions still rely on Node 20, you’ll feel it in pipeline breakage, not just release notes.

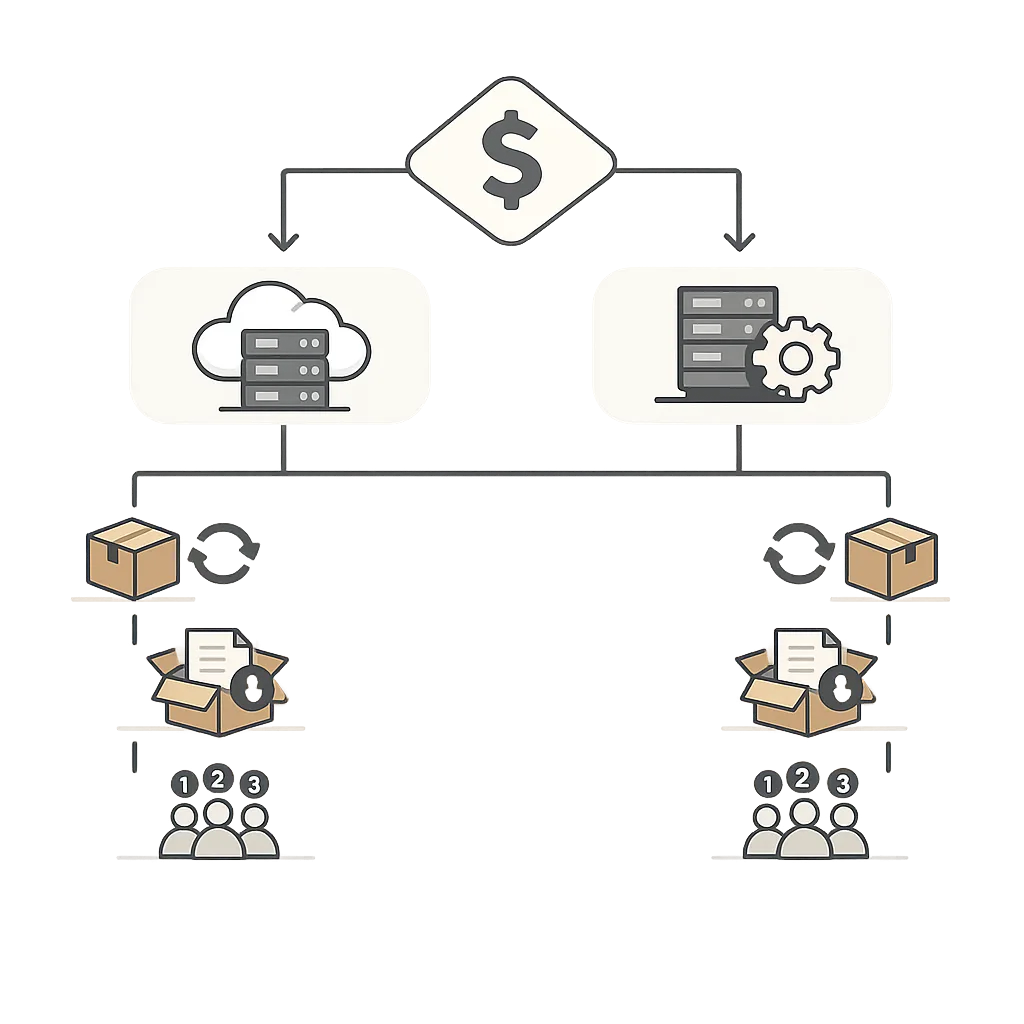

GitHub Actions pricing 2026: how to model your bill

Here’s the thing: a lot of teams try to predict CI/CD costs by eyeballing a few popular workflows. That’s how you miss the long tail of scheduled jobs, flaky retries, and artifact bloat. Use a small set of reliable measures:

- Minute volume by runner type and repo visibility (public vs private/internal). Break out Linux, Windows, macOS, and any larger machine sizes.

- Concurrency and queue time distributions. Median plus P95 tells you if you’re buying minutes or buying time-to-merge.

- Artifact/storage time series and retention. Accidental hoarding is common and expensive.

- Action mix (first‑party vs third‑party vs custom) and Node engine requirements.
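To make the "median plus P95" measure concrete, here is a minimal sketch that summarizes queue times with a nearest-rank percentile. The sample values and the function names are hypothetical; how you export queue times from your own usage data is assumed, not shown.

```python
import math
from statistics import median


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def queue_summary(queue_seconds):
    """Return the two numbers worth tracking per runner type."""
    return {"median": median(queue_seconds), "p95": percentile(queue_seconds, 95)}


# Hypothetical sample: seconds each job waited before a runner picked it up.
sample = [4, 6, 5, 7, 5, 6, 8, 5, 240, 6]
print(queue_summary(sample))
```

A low median with a high P95, as in this made-up sample, suggests the problem is bursty waits rather than raw minute volume.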

Then build a simple model with three levers:

- Hosted minutes cost after the January 2026 reduction (varies by machine size).

- Self‑hosted “infrastructure” cost: your VM/container, network egress, ephemeral disk, plus platform overhead you’d otherwise ignore (image build time, base image pulls, etc.).

- A potential self‑hosted platform fee at $0.002/min if/when GitHub re‑introduces it. Keep it as a toggle in your spreadsheet.

Run two scenarios for each critical repo: “status quo” and “hosted‑first.” In 2026, the hosted‑first scenario often wins for day‑to‑day Linux/Windows jobs because of the price cuts and zero fleet maintenance, while self‑hosted still shines for specialized builds—think Android, iOS signing, GPU/ARM cross‑compiles, or aggressive Docker layer reuse where cold‑start penalties dominate.

A practical example you can copy

Let’s say your org uses 120,000 Linux minutes, 10,000 Windows minutes, and 3,000 macOS minutes per month, with 70% in private repos. Create a table that:

- Multiplies minutes by the current hosted rates for each machine size you use.

- Separately sums the minutes you run on self‑hosted hardware and applies your true infrastructure cost per minute (cloud VM + storage + amortized image‑build time).

- Adds a switchable line item for a $0.002/min platform fee only on self‑hosted minutes, so finance can see upside/downside.

Now sensitivity‑test the model with two dials: a 20% cut in minutes from cache tuning and a 25% move of generic CI jobs back to hosted runners. Most teams I’ve advised find that these two changes alone cover the potential platform fee exposure and reduce queue spikes without sacrificing throughput.
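The table and the two dials can be sketched in a few lines of Python. Treat this as an illustration under stated assumptions: the hosted rates and the infrastructure cost per minute are placeholders (not GitHub's published prices), and the 60,000-minute split between hosted and self-hosted Linux is invented for the example. Only the $0.002/min fee and the monthly minute totals come from the text above.

```python
from dataclasses import dataclass, replace

PLATFORM_FEE_PER_MIN = 0.002  # announced, then postponed; kept as a toggle


@dataclass
class Scenario:
    hosted_minutes: dict           # runner type -> hosted minutes per month
    hosted_rates: dict             # runner type -> USD per minute (placeholders below)
    self_hosted_minutes: float     # minutes per month on your own fleet
    infra_cost_per_min: float      # your measured VM + storage + image-build cost
    platform_fee_on: bool = False  # the spreadsheet toggle


def monthly_cost(s):
    """Break the monthly bill into the three levers plus a total."""
    hosted = sum(m * s.hosted_rates[k] for k, m in s.hosted_minutes.items())
    infra = s.self_hosted_minutes * s.infra_cost_per_min
    fee = s.self_hosted_minutes * PLATFORM_FEE_PER_MIN if s.platform_fee_on else 0.0
    return {"hosted": hosted, "self_hosted_infra": infra,
            "platform_fee": fee, "total": hosted + infra + fee}


def apply_dials(s, minute_cut=0.20, shift_to_hosted=0.25, shift_into="linux"):
    """Cut all minutes by minute_cut, then move a share of self-hosted minutes to hosted."""
    keep = 1.0 - minute_cut
    self_hosted = s.self_hosted_minutes * keep
    moved = self_hosted * shift_to_hosted
    hosted = {k: m * keep for k, m in s.hosted_minutes.items()}
    hosted[shift_into] = hosted.get(shift_into, 0.0) + moved
    return replace(s, hosted_minutes=hosted, self_hosted_minutes=self_hosted - moved)


# Assumption: half of the 120,000 Linux minutes run on self-hosted hardware.
baseline = Scenario(
    hosted_minutes={"linux": 60_000, "windows": 10_000, "macos": 3_000},
    hosted_rates={"linux": 0.005, "windows": 0.010, "macos": 0.060},  # PLACEHOLDER rates
    self_hosted_minutes=60_000,
    infra_cost_per_min=0.004,  # PLACEHOLDER: substitute your own measured cost
)

for label, scenario in [
    ("status quo", baseline),
    ("status quo + fee", replace(baseline, platform_fee_on=True)),
    ("tuned + fee", replace(apply_dials(baseline), platform_fee_on=True)),
]:
    print(label, monthly_cost(scenario))
```

Swap in the current rates for each machine size you use before showing the output to finance. The structure, not the placeholder numbers, is the point.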

Should you self‑host in 2026?

Self‑hosting still makes sense when:

- Your jobs need capabilities unavailable or cost‑inefficient on hosted (custom kernel modules, nested virtualization, GPUs, specific Android SDK images, Apple codesigning at scale).

- Your queue SLOs are tighter than what you’re seeing on peak hosted capacity, and you can’t afford bursty wait times before a Friday cutoff.

- Your data governance requires private network paths, VNET/VPC injection, or particular secrets management patterns.

But there’s a catch: every hour your platform team spends babysitting runners, image drift, and auto‑update breakages is time they’re not improving test pyramid health or build graph structure. The January 2026 hosted price cuts tilt the equation; if you haven’t re‑run your buy‑vs‑build math since then, you might be paying an “operational tax” to save pennies.

Upgrade deadlines you can’t ignore

Mark these on the wall calendar—this isn’t optional:

- March 16, 2026: GitHub blocks configuration of self‑hosted runners older than v2.329.0. Bake the version into your AMIs, container images, and bootstrap scripts. Don’t rely on auto‑update to rescue golden images.

- Early March 2026: Runners switch their default embedded Node to Node 24. Audit custom Actions and ensure third‑party Actions you pin have Node 24 builds.

- April 30, 2026: Node 20 EOL. If your action.yml files still declare runs.using: node20, move now. Your build might keep limping along… until it doesn’t.
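For the March 16 item, a build-time check can compare each image's runner version against the v2.329.0 floor. This is a minimal sketch: the fleet inventory and image names are hypothetical, and how you collect the version strings (image metadata, an inventory file) is left to your pipeline.

```python
MIN_RUNNER_VERSION = (2, 329, 0)  # floor named in the March 16, 2026 enforcement


def parse_version(text):
    """Turn '2.329.0' or 'v2.329.0' into a tuple of ints, padded to three parts."""
    parts = [int(p) for p in text.strip().lstrip("vV").split(".")]
    parts += [0] * (3 - len(parts))
    return tuple(parts)


def is_allowed(version_text, minimum=MIN_RUNNER_VERSION):
    """True when the runner version meets the minimum (numeric, not string, comparison)."""
    return parse_version(version_text) >= minimum


def outdated(fleet):
    """Return the image names whose baked-in runner version is below the floor."""
    return sorted(name for name, version in fleet.items() if not is_allowed(version))


# Hypothetical inventory: image name -> runner version baked into it.
fleet = {
    "linux-golden-2026-02": "2.331.0",
    "windows-build-legacy": "2.328.0",
    "arm-cross-compile": "v2.329.0",
}
print(outdated(fleet))
```

Failing the image build when `outdated` returns anything keeps an old golden image from reaching the autoscaler in the first place.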

Need a hand pressure‑testing your plan? Our field notes on the 2026 changes and mitigations are collected here: what to change in your GitHub Actions usage, our take on self‑hosted runner pricing and next steps, and an accelerated Node 20 EOL upgrade plan.

The Cost Control Ladder: five moves that work

These are the changes I’ve seen consistently deliver results without drama:

- Kill dead minutes. Add a first step that checks changed paths and exits early when nothing relevant moved. If only docs changed, don’t run the full matrix. If only a frontend folder changed, skip backend integration tests.

- Cache like you mean it. For Node, Python, Java, and Android, audit cache keys and restore steps. Missed keys and oversized caches both waste minutes.

- Right‑size the runner. Most jobs don’t need the largest machine size. Start small, scale up only for specific workflows with proven CPU/memory headroom issues.

- Split build from test. Parallelize intentionally. Fat single jobs hide slow steps and push you into oversized runners.

- Artifact retention hygiene. Set defaults to 3–7 days for ephemeral artifacts. Keep long‑term artifacts in object storage with lifecycle rules, not in Actions.
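The first rung, skipping work based on changed paths, comes down to a small mapping from file paths to jobs. The sketch below shows that decision logic only; the directory layout and job names are hypothetical. GitHub Actions also offers path filters on workflow triggers, which may cover simpler cases without any script.

```python
from fnmatch import fnmatchcase

# Hypothetical repo layout: first matching area wins.
AREA_PATTERNS = [
    ("docs", ["docs/*", "*.md"]),
    ("frontend", ["frontend/*"]),
    ("backend", ["backend/*"]),
]
# Hypothetical job names triggered by each area; docs changes trigger none.
AREA_JOBS = {
    "docs": set(),
    "frontend": {"frontend-tests"},
    "backend": {"backend-integration"},
}
ALL_JOBS = {"frontend-tests", "backend-integration"}


def area_for(path):
    """Classify one changed file, or return None if no rule covers it."""
    for area, patterns in AREA_PATTERNS:
        if any(fnmatchcase(path, pattern) for pattern in patterns):
            return area
    return None


def jobs_to_run(changed_files):
    """Union of jobs for the changed areas; any unclassified file runs everything."""
    jobs = set()
    for path in changed_files:
        area = area_for(path)
        if area is None:
            return set(ALL_JOBS)  # be conservative when a change is not understood
        jobs |= AREA_JOBS[area]
    return jobs


print(jobs_to_run(["docs/intro.md", "README.md"]))
print(jobs_to_run(["frontend/src/app.ts"]))
print(jobs_to_run(["Makefile"]))
```

Falling back to the full set for unrecognized paths is a deliberate choice: a missed rule costs minutes, not a missed regression.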

A 30‑60‑90 day blueprint

Days 1–30: Instrument and stabilize

Turn on usage reports at the org level, then inspect the top 20 workflows by minutes. Add a baseline step that prints runner version, image digest, and key tool versions at job start so you catch drift during March’s enforcement window. Confirm your self‑hosted images deploy with runner v2.329.0 or later and that auto‑updates aren’t silently blocked by permissions.

In parallel, prune redundancy: remove duplicate matrix axes, collapse near‑identical jobs, and cut artifact retention. You can usually reclaim 10–20% of minutes here with zero risk.

Days 31–60: Rebalance hosted vs self‑hosted

Move generic Linux and Windows CI back to hosted runners where the January 2026 price drop gives you the most bang for the buck. Keep self‑hosted for jobs where stable caches, specialized toolchains, or signing workflows change the economics. If you use Kubernetes‑based runner autoscaling, freeze image updates for a week after you land v2.329.0 to watch real traffic under enforcement.

Codify this with a routing convention: runner labels such as runs-on: [self-hosted, heavy-build] for the jobs that truly need your fleet; everything else defaults to hosted.

Days 61–90: Lock in wins

Add policy‑as‑code guardrails: required caching keys for big languages, enforced max artifact sizes, and workflow dispatch rules that prevent full pipelines on trivial PRs. Then sit down with finance to align on a usage envelope and alerting thresholds. If GitHub re‑introduces a self‑hosted platform fee this year, you’ll already have a tested toggle in your model and a plan to shift more workloads back to hosted without chaos.

People also ask

Is self‑hosted still cheaper in 2026?

Sometimes. If your workloads reliably benefit from warm caches, large ephemeral storage, or hardware GitHub doesn’t offer at the right price (GPUs, niche macOS needs), you can still beat hosted on cost and latency. But after the January price cuts, many teams find hosted cheaper for baseline CI—particularly when you factor in the engineering time to tend a fleet.

Does any fee apply to public repositories?

GitHub has consistently said that standard usage on public repositories remains free. If you’re running most work publicly, your optimization priority is performance and queue time rather than price.

How do I estimate artifact costs without perfect data?

Start with 30 days of data: the average artifact size per run and your retention setting. Steady‑state stored volume is roughly average size per run × workflow runs per day × retention days. That gets you close enough to decide if aggressive cleanup policies will move the needle. Most teams discover a handful of outsized artifacts that skew the curve.
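As a rough sketch of that arithmetic, the function below estimates steady-state stored volume and compares two retention settings. The input numbers are made up for illustration, and the estimate assumes every run uploads about the same amount and that 1 GB = 1000 MB.

```python
def steady_state_gb(avg_mb_per_run, runs_per_day, retention_days):
    """Approximate stored volume once uploads and expirations balance out."""
    return avg_mb_per_run * runs_per_day * retention_days / 1000


# Hypothetical inputs: 150 MB of artifacts per run, 200 runs per day.
for days in (30, 7):
    print(f"{days}-day retention: ~{steady_state_gb(150, 200, days):.0f} GB stored")
```

Multiply the result by your storage rate to get a monthly figure. The comparison between retention settings is usually more useful than the absolute number.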

What happens on March 16, 2026?

Self‑hosted runners below v2.329.0 will be blocked at configuration. If your autoscaler or image pipeline still stamps older versions, they’ll fail to register. Bake the version into your images and verify during build time; don’t rely on runtime auto‑upgrades.

Workflow and dependency hygiene for March and April

Two quick mitigations reduce surprise breakage this spring:

- Pin and test Actions. Replace floating refs (branches, moving major tags) with full commit SHAs or exact release tags that you’ve validated against Node 24.

- Surface engines in CI logs. Print node --version, java -version, python --version, and ruby --version at job start. When something flips under you (base image updates, toolchain changes), you’ll have receipts.
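One way to surface engine versions at job start is a small script that runs each version command and tolerates missing tools. This is a sketch, not a prescribed step: the tool list mirrors the four commands above, and the "not installed" and "error" markers are arbitrary choices.

```python
import shutil
import subprocess

# The four version commands named in the text; extend to match your toolchain.
TOOLS = {
    "node": ["node", "--version"],
    "java": ["java", "-version"],
    "python": ["python", "--version"],
    "ruby": ["ruby", "--version"],
}


def tool_versions(tools=TOOLS):
    """Return the first line of each tool's version output, or a marker if unavailable."""
    report = {}
    for name, cmd in tools.items():
        if shutil.which(cmd[0]) is None:
            report[name] = "not installed"
            continue
        try:
            result = subprocess.run(cmd, capture_output=True, text=True, timeout=30)
        except (OSError, subprocess.TimeoutExpired):
            report[name] = "error"
            continue
        # Some tools (java -version, for example) write to stderr instead of stdout.
        output = (result.stdout or result.stderr).strip()
        report[name] = output.splitlines()[0] if output else "unknown"
    return report


for name, version in tool_versions().items():
    print(f"{name}: {version}")
```

Running this as the first step of a job puts one short, greppable block at the top of every log.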

If you need a structured path, we published a zero‑drama Node 20 upgrade checklist here: 30‑day Node 20 EOL plan.

Hosted vs self‑hosted: performance realities

Hosted runners start clean; that’s great for security and reproducibility, but it penalizes large monorepos and heavy Docker workflows. You can mask that with better layer caching and a two‑stage build (compile, then test on a smaller runner). Self‑hosted can feel blazing fast because caches and toolchains are already warm, but it’s easy to overestimate savings if you don’t include the cost of building, patching, and validating those base images—especially when the runner version requirement changes mid‑quarter.

One more operational truth: when incidents hit hosted capacity, self‑hosted can be a lifeboat. But you shouldn’t carry a year‑round fleet just in case. A small, well‑maintained emergency pool plus a documented cutover path is usually enough.

What to do next

- Audit your runner fleet and pin v2.329.0+ in images before March 16, 2026.

- Re‑model your bill with January’s hosted price cuts and a toggle for a possible self‑hosted platform fee at $0.002/min.

- Move undifferentiated Linux/Windows CI back to hosted; reserve self‑hosted for specialized workloads.

- Fix the basics: changed‑paths filters, cache keys, artifact retention, job parallelization.

- Align finance, platform, and app teams on a quarterly CI budget and SLOs.

If you want a working session with our team on cost modeling or a no‑drama runner upgrade plan, see our engineering services and drop us a line via ByBowu contacts. We’ll get you from uncertainty to a predictable, fast pipeline this quarter.
