GitHub Actions Pricing 2026: What Changed, What’s Next

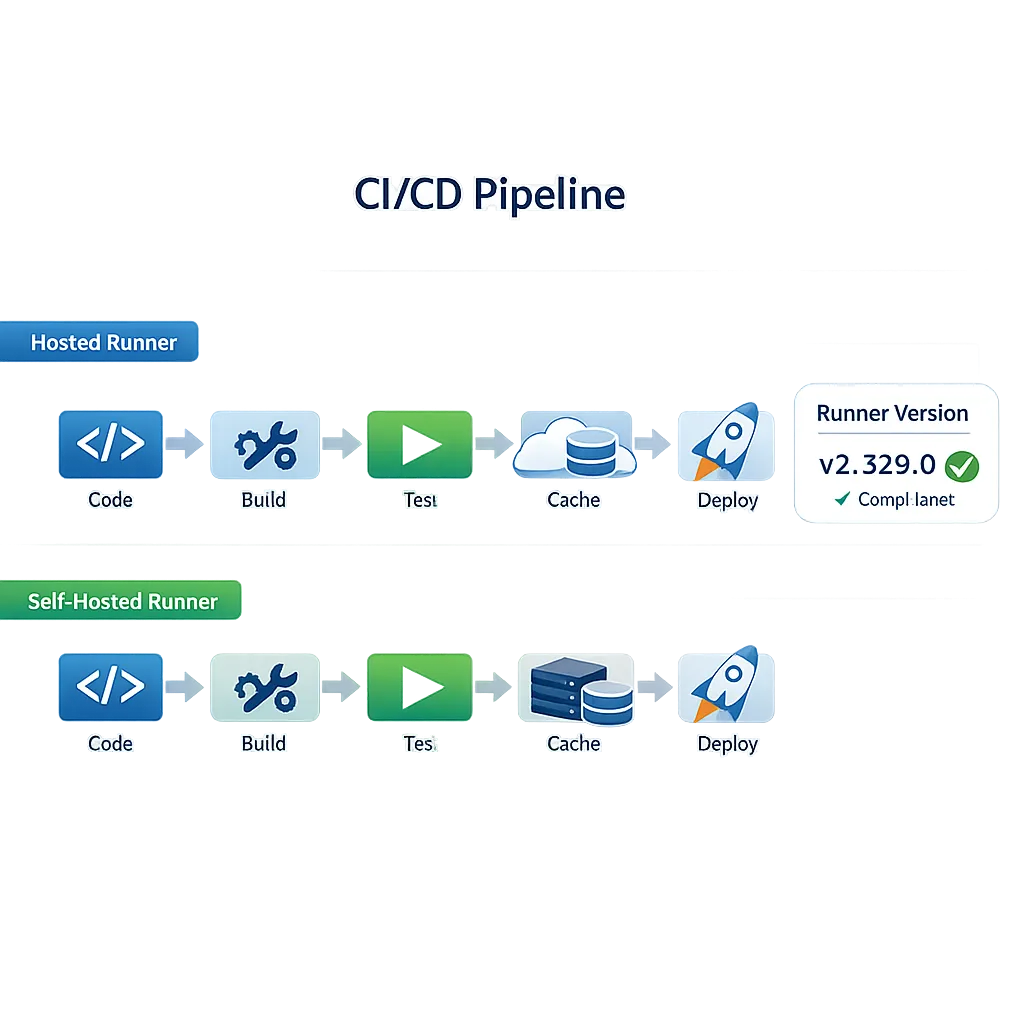

Let’s clear the fog on GitHub Actions pricing 2026. As of Friday, March 13, 2026, GitHub has already reduced rates for hosted runners, postponed the much‑debated self‑hosted fee, and is days away from enforcing a minimum runner version at configuration time. That mix of cost signals and operational deadlines is exactly how teams end up with surprise invoices or stalled pipelines. Here’s what actually happened, what didn’t, and how to tune your CI/CD so you’re faster and cheaper without breaking deploys on March 16.

First, a reality check: what changed on January 1—and what didn’t on March 1

Two things defined the conversation this quarter. One is real and already in effect; the other triggered panic, then a U‑turn.

Hosted runners got cheaper

On January 1, 2026, GitHub cut list prices for hosted runners—up to 39% depending on the machine type. If you run medium to large pipelines on standard Linux or Windows runners, you should already see lower per‑minute rates reflected in your usage reports. Free minute quotas didn’t change, so some teams are seeing meaningful savings without touching workflow YAML.

The self‑hosted platform fee was postponed

GitHub originally announced a $0.002/minute “Actions cloud platform” charge for self‑hosted runner usage starting March 1, 2026. After feedback, they explicitly postponed that billing change to re‑evaluate the approach. Translation: as of today, there is no new per‑minute platform charge on self‑hosted usage on GitHub.com. Keep your finance folks from over‑rotating—monitor the changelog, but don’t reprice your entire CI budget based on the initial announcement.

Here’s the thing: postponement isn’t cancellation. Treat it as a yellow light. The control plane that schedules and coordinates jobs costs real money to operate. Expect GitHub to come back with a revised model; design your pipelines so you can adapt if or when a platform component gets priced.

March 16 is a hard stop for outdated self‑hosted runners

Separate from pricing, there’s an operational deadline you can’t ignore: starting Monday, March 16, 2026, GitHub will block configuration/registration of self‑hosted runners older than v2.329.0. If your automation spins up runners from golden images, base AMIs, or container templates that baked in an older runner, those registrations will fail after the cutover. Brownouts have been running this month to surface breakage ahead of time—including a window on Friday, March 13.

What does “configuration‑time enforcement” mean in practice? Existing registered runners won’t suddenly stop executing just because of the minimum at registration, but any workflow that depends on creating or re‑registering runners on the fly—Kubernetes ARC pools, ephemeral VMs, spot fleets—will be blocked if the baked runner is below v2.329.0. You’ll also need to keep an eye on the separate “keep your runners reasonably up to date” policy that can pause queuing when you drift too far behind.

Action item: verify your images, install scripts, and automation now. If you maintain an ARC image, pull a recent tag that includes a modern runner. For golden AMIs, rebuild with v2.329.0 or newer and roll out gradually to your groups.
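If your image pipeline downloads the official runner archives, a small gate in the build can catch stale binaries before they ship. Below is a minimal sketch. It assumes your images fetch files that follow the usual actions-runner-<os>-<arch>-<version>.tar.gz (or .zip) naming. The helper names (`parse_version`, `is_too_old`, `runner_version_from_name`, `find_stale_tarballs`) are hypothetical. The 2.329.0 floor is the one described above.

```python
import re
from pathlib import Path
from typing import List, Optional, Tuple

# Minimum runner version for configuration/registration from March 16, 2026 (per the article).
MIN_RUNNER: Tuple[int, int, int] = (2, 329, 0)

# Matches archive names such as actions-runner-linux-x64-2.329.0.tar.gz
# or actions-runner-win-x64-2.329.0.zip; the version is the final dotted triple.
TARBALL_RE = re.compile(r"^actions-runner-[\w-]+?-(\d+)\.(\d+)\.(\d+)\.(?:tar\.gz|zip)$")


def parse_version(text: str) -> Tuple[int, int, int]:
    """Parse '2.329.0' or 'v2.329.0' into a comparable (major, minor, patch) tuple."""
    major, minor, patch = (int(part) for part in text.strip().lstrip("v").split("."))
    return major, minor, patch


def is_too_old(version: str, minimum: Tuple[int, int, int] = MIN_RUNNER) -> bool:
    """True if the runner version is below the enforced minimum."""
    return parse_version(version) < minimum


def runner_version_from_name(filename: str) -> Optional[Tuple[int, int, int]]:
    """Extract the version from a runner archive name, or None if the name doesn't match."""
    match = TARBALL_RE.match(filename)
    return tuple(int(group) for group in match.groups()) if match else None


def find_stale_tarballs(root: Path) -> List[Path]:
    """List runner archives under root whose version is below MIN_RUNNER."""
    stale = []
    for path in root.rglob("actions-runner-*"):
        version = runner_version_from_name(path.name)
        if version is not None and version < MIN_RUNNER:
            stale.append(path)
    return sorted(stale)
```

For example, you could fail the image build when `find_stale_tarballs(Path("build"))` returns anything. If your install script pins a version string instead, you could pass that string to `is_too_old`.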

People also ask: common 2026 CI/CD questions

Will self‑hosted GitHub Actions be charged in 2026?

As of March 13, 2026, the proposed $0.002/minute charge is postponed. There’s no active platform fee on self‑hosted usage on GitHub.com today. Keep tracking the official changelog for any new date or revised structure.

How do I estimate my Actions bill now?

Start with your 2025 monthly usage reports to baseline minutes by runner type and repository visibility. Then re‑price those minutes using today’s hosted runner rates (after the January 1 reductions). Simulate best‑case with higher cache hit rates and worst‑case with cache miss penalties. If you’re considering moving jobs from self‑hosted to hosted to exploit the price cuts, run A/B telemetry for a week: compare queue times, cache effectiveness, and job success rates, not just raw per‑minute math.
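The sketch below makes the re-pricing step concrete. It multiplies baseline minutes by per-minute rates and brackets the result with best-case and worst-case cache factors. The rates, runner labels, and the 0.85/1.20 factors are placeholders, not GitHub's published prices, so substitute current figures from your own billing page. For simplicity, it also ignores free-minute quotas.

```python
from typing import Dict

# Placeholder per-minute rates in USD; NOT real GitHub prices. Replace with current list prices.
RATES: Dict[str, float] = {"linux-2core": 0.005, "linux-8core": 0.020, "windows-2core": 0.010}


def reprice(minutes_by_runner: Dict[str, float], rates: Dict[str, float]) -> float:
    """Total cost of the given minutes at the given per-minute rates."""
    return sum(minutes * rates[runner] for runner, minutes in minutes_by_runner.items())


def scenarios(minutes_by_runner: Dict[str, float], rates: Dict[str, float],
              best_factor: float = 0.85, worst_factor: float = 1.20) -> Dict[str, float]:
    """Baseline cost plus best/worst cases, modeling cache hits/misses as a minutes multiplier."""
    baseline = reprice(minutes_by_runner, rates)
    return {
        "best": round(baseline * best_factor, 2),
        "baseline": round(baseline, 2),
        "worst": round(baseline * worst_factor, 2),
    }


if __name__ == "__main__":
    # Hypothetical monthly baseline taken from a 2025 usage report.
    baseline_minutes = {"linux-2core": 120_000, "linux-8core": 15_000, "windows-2core": 8_000}
    print(scenarios(baseline_minutes, RATES))
```

With these placeholder inputs, the baseline comes to $980, with a best case of $833 and a worst case of $1,176.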

What breaks if I ignore the runner update?

Anything that registers or re‑registers a runner after March 16 using a too‑old binary. That includes auto‑healing pools, image‑based scaling, and one‑shot ephemeral runners. You might not notice immediately in steady‑state, then suddenly fail on a scale‑out event during a peak deploy. Don’t leave this to a Friday night incident.

Let’s get practical: a no‑drama CI/CD cost playbook

This is the same process I run with teams that have hundreds of workflows and a mix of hosted and self‑hosted fleets. It’s fast, boring, and it works.

1) Inventory by job class, not by repo

Group jobs into classes with similar runtime characteristics: unit test (short, CPU‑bound), integration (long, I/O‑heavy), packaging (cache‑sensitive), security scans (burst CPU + network), and release (serial + gated). This lens exposes where hosted runners’ price cuts actually matter and where self‑hosted keeps an edge.
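One way to build that inventory is to tag each job with a class by name pattern, then total the minutes per class and runner. This sketch rests on assumptions. The pattern-to-class rules and the (job name, runner label, minutes) record shape are hypothetical, so adapt them to how your workflows name jobs and how you export usage.

```python
from collections import defaultdict
from fnmatch import fnmatchcase
from typing import Dict, Iterable, Tuple

# Hypothetical job-name patterns mapped to job classes; the first matching rule wins.
CLASS_RULES = [
    ("unit-*", "unit"),
    ("integration-*", "integration"),
    ("package-*", "packaging"),
    ("*scan*", "security"),
    ("release*", "release"),
]


def classify(job_name: str) -> str:
    """Return the job class for a job name, or 'unclassified' if no rule matches."""
    for pattern, job_class in CLASS_RULES:
        if fnmatchcase(job_name, pattern):
            return job_class
    return "unclassified"


def minutes_by_class(jobs: Iterable[Tuple[str, str, float]]) -> Dict[Tuple[str, str], float]:
    """Sum minutes per (job class, runner label) from (job_name, runner_label, minutes) records."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for job_name, runner_label, minutes in jobs:
        totals[(classify(job_name), runner_label)] += minutes
    return dict(totals)
```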

2) Establish cache economics

Cache effectiveness is the silent multiplier. Measure hit/miss rates for dependency caches (npm, Gradle, Maven, pip), Docker layer reuse, and Actions cache steps. A 15–20 point improvement in cache hit rate often offsets a nominal per‑minute increase, and it shrinks wall‑clock time too. Use deduplicated keys, pin tool versions to reduce churn, and keep artifacts close to runners when possible.
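The "silent multiplier" is easy to quantify. The average run length is a weighted mix of warm-cache and cold-cache runs, and the ratio of old to new averages tells you how large a per-minute price increase the improvement would absorb. The 4-minute warm and 10-minute cold figures in this sketch are hypothetical.

```python
def expected_minutes(hit_rate: float, warm_minutes: float, cold_minutes: float) -> float:
    """Average job length when a fraction `hit_rate` of runs restore a warm cache."""
    return hit_rate * warm_minutes + (1 - hit_rate) * cold_minutes


def absorbable_price_increase(old_hit: float, new_hit: float, warm: float, cold: float) -> float:
    """Fractional per-minute price increase that a hit-rate gain would fully offset."""
    return expected_minutes(old_hit, warm, cold) / expected_minutes(new_hit, warm, cold) - 1


if __name__ == "__main__":
    # Hypothetical job: 4 minutes with a warm cache, 10 minutes on a miss.
    before = expected_minutes(0.60, 4, 10)
    after = expected_minutes(0.78, 4, 10)
    print(f"{before:.2f} -> {after:.2f} min per run")
    print(f"offsets a {absorbable_price_increase(0.60, 0.78, 4, 10):.0%} rate increase")
```

Under these assumed numbers, raising the hit rate from 60% to 78% cuts the average run from 6.4 to 5.32 minutes. That saving would absorb roughly a 20% per-minute price increase.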

3) Pin your toolchains

Drift is expensive. Lock versions for compilers, SDKs, and package managers. Keep a controlled path to updates via weekly PRs. This reduces cold starts, invalidates fewer caches, and makes your runs predictable. It also makes the March 16 runner binary update trivial because your images aren’t doing surprise upgrades on first boot.
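Pinning pays off most when the pinned version is part of the cache key. The sketch below builds a deterministic key from the OS, a pinned tool version, and a hash of the lockfile. That is similar in spirit to combining a version with `hashFiles(...)` in an actions/cache key. The function name and the set of rejected floating tags are hypothetical.

```python
import hashlib

# Version strings that float, so a cache entry's contents could silently change under one key.
FLOATING = {"", "*", "latest", "lts", "stable"}


def cache_key(os_name: str, tool: str, tool_version: str, lockfile: bytes) -> str:
    """Build a deterministic cache key from a pinned tool version and a lockfile hash."""
    if tool_version.strip().lower() in FLOATING:
        raise ValueError(f"pin {tool} to an exact version instead of {tool_version!r}")
    digest = hashlib.sha256(lockfile).hexdigest()[:16]  # short, stable content hash
    return f"{os_name}-{tool}-{tool_version}-{digest}"
```

The key changes only when someone deliberately bumps the version or edits the lockfile, which is exactly when a cache miss is expected.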

4) Right‑size the runner for each job class

Don’t pay for idle cores. Unit tests often don’t parallelize past a small threshold—use 2‑core Linux. Heavy integration tests that hit multiple services might justify 8–16 cores on bursty days. If you need macOS or Windows, batch those expensive jobs and trim setup time ruthlessly.
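A quick way to reason about right-sizing is an Amdahl's-law style model. A job has a serial part plus a parallel part that only scales up to some number of cores. In this sketch, the price also scales linearly with core count. All inputs are hypothetical; the point is the shape of the trade-off.

```python
def job_minutes(serial_min: float, parallel_min: float, cores: int, max_parallelism: int) -> float:
    """Runtime when the parallel portion only speeds up to `max_parallelism` cores."""
    return serial_min + parallel_min / min(cores, max_parallelism)


def job_cost(serial_min: float, parallel_min: float, cores: int,
             max_parallelism: int, rate_per_core_min: float) -> float:
    """Cost assuming (hypothetically) that price scales linearly with core count."""
    return cores * rate_per_core_min * job_minutes(serial_min, parallel_min, cores, max_parallelism)


if __name__ == "__main__":
    # Hypothetical suite: 2 min serial setup, 8 min of parallelizable tests that stop scaling at 4 cores.
    for cores in (2, 4, 8, 16):
        print(cores, job_minutes(2, 8, cores, 4), job_cost(2, 8, cores, 4, 1.0))
```

With these made-up numbers, 2 cores is cheapest (6 minutes, 12 core-minutes) and 4 cores is fastest (4 minutes, 16 core-minutes). At 8 and 16 cores the job costs more without finishing any sooner, because the suite stops scaling at 4.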

5) Measure queue time and fail‑fast behavior

Teams fixate on per‑minute price and forget that 90 seconds of queue time times 2,000 jobs is real money. Hosted runners may reduce queueing at peak if your self‑hosted fleet is undersized. Conversely, if you live on self‑hosted for data locality or GPUs, keep headroom (20–30%) so you don’t pay in developer time.
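The queue-time arithmetic is worth writing down: 90 seconds across 2,000 jobs is 180,000 seconds, or 50 hours of waiting. The sketch turns that into a rough dollar figure. Two inputs are assumptions you should replace with your own: the share of queue time that actually blocks a developer, and the loaded hourly cost.

```python
def queue_hours(queue_seconds_per_job: float, jobs: int) -> float:
    """Total hours spent waiting in the queue."""
    return queue_seconds_per_job * jobs / 3600


def queue_cost(queue_seconds_per_job: float, jobs: int,
               blocked_share: float, loaded_hourly_cost: float) -> float:
    """Rough cost of queue time that actually blocks someone (both rates are assumptions)."""
    return queue_hours(queue_seconds_per_job, jobs) * blocked_share * loaded_hourly_cost
```

For example, `queue_cost(90, 2000, 0.3, 100.0)` assumes a third of the wait blocks someone at a hypothetical $100 per hour. It comes to $1,500 for those 2,000 jobs.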

6) Prove or disprove hosted migration

Pick two job classes and run them on both hosted and self‑hosted for five business days. Compare not just cost per minute, but throughput, failure rates, and time to green. If hosted wins on stability and total time, take the win. If self‑hosted still wins for tight caching or network adjacency, double down—but automate runner updates so you never miss a policy change again.
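To keep the five-day comparison honest, summarize each job class per runner type on the same metrics. This sketch assumes you have already exported one record per job run (queue seconds, run seconds, success) from your own telemetry. The `Run` record and `summarize` helper are hypothetical, and data collection is not shown.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Dict, Iterable, List, Tuple


@dataclass
class Run:
    runner: str        # e.g. "hosted" or "self-hosted"
    job_class: str     # e.g. "unit", "integration"
    queue_s: float     # seconds from queued to started
    duration_s: float  # seconds from started to finished
    succeeded: bool


def summarize(runs: Iterable[Run]) -> Dict[Tuple[str, str], dict]:
    """Per (job class, runner): run count, median queue/duration/end-to-end, failure rate."""
    groups: Dict[Tuple[str, str], List[Run]] = defaultdict(list)
    for run in runs:
        groups[(run.job_class, run.runner)].append(run)
    summary = {}
    for key, group in sorted(groups.items()):
        summary[key] = {
            "runs": len(group),
            "median_queue_s": median(r.queue_s for r in group),
            "median_duration_s": median(r.duration_s for r in group),
            "median_end_to_end_s": median(r.queue_s + r.duration_s for r in group),
            "failure_rate": sum(not r.succeeded for r in group) / len(group),
        }
    return summary
```

Median end-to-end time (queue plus run) is a reasonable stand-in for "time to green" on successful runs. Multiply the duration figures by your per-minute rates to compare cost alongside them.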

Architecture patterns that cut minutes without slowing teams

You don’t need a platform rewrite to take 20–40% off your CI minutes. These patterns pay for themselves fast.

Parallelize where it’s real: shard large test suites by file size or historical duration, not alphabetically. Promote a small “smoke” path to run on every push (<60 seconds), with heavier suites on PR labels or scheduled runs. Use dependency graphs to skip jobs when unchanged. For monorepos, treat each package with its own cache scope and test shards so a single large change doesn’t invalidate everything.
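Sharding by historical duration is essentially a bin-packing problem. A common heuristic is longest-processing-time-first: sort test files by past duration, longest first, and always hand the next file to the shard with the least total time so far. This sketch assumes you already have per-file durations (for example from JUnit reports), and the function name is hypothetical.

```python
import heapq
from typing import Dict, List


def shard_by_duration(durations: Dict[str, float], shards: int) -> List[List[str]]:
    """Greedy longest-first assignment of test files to the currently lightest shard."""
    heap = [(0.0, index) for index in range(shards)]  # (total time so far, shard index)
    heapq.heapify(heap)
    buckets: List[List[str]] = [[] for _ in range(shards)]
    # Longest files first; ties broken by name so the result is deterministic.
    for name, seconds in sorted(durations.items(), key=lambda item: (-item[1], item[0])):
        load, index = heapq.heappop(heap)
        buckets[index].append(name)
        heapq.heappush(heap, (load + seconds, index))
    return buckets
```

For durations of 10, 8, 6, 5, and 3 units across two shards, this produces shards of 15 and 17. The heuristic isn't always optimal (a perfect 16/16 split exists here), but it is close, cheap to compute, and far better than splitting alphabetically.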

Optimize Docker builds: multi‑stage Dockerfiles with stable base layers radically improve cache hits. If you use hosted runners, align base images with what GitHub pre‑caches; if self‑hosted, pre‑warm layer caches across the fleet. Push/pull only what you must. For SBOMs, generate once and reuse downstream steps.

Move “expensive but infrequent” to scheduled: full end‑to‑end tests, deep SAST/DAST configurations, and platform matrix builds don’t need to run on every commit. Run a minimal gate on PRs, batch the heavy stuff nightly, and page the team only for real regressions.

Watchlist: Node.js runtime changes inside Actions

There’s a quiet but important runtime shift underway in the Actions ecosystem. Node.js 20 is being deprecated as the runtime for JavaScript actions, and recent GitHub notes point to runners defaulting to Node 24 around June 2, 2026. If your workflows depend on third‑party actions pinned to node20, you’ll want to audit and plan upgrades or forks before that date. This transition often flies under the radar until a dependency breaks, so pair it with your runner update work.
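A first pass at that audit can be a plain text scan for the runtime an action declares under `runs.using` in its action.yml. This is a minimal sketch. It uses a regex rather than a full YAML parser, so unusual formatting could slip past it, and the helper names are hypothetical. For third-party actions, you would point it at checkouts of each action at the ref you pin; fetching those checkouts is not shown.

```python
import re
from pathlib import Path
from typing import List, Optional

# Matches the runtime line in an action.yml, e.g. `using: 'node20'` or `using: "node20"`.
USING_RE = re.compile(r"^\s*using:\s*['\"]?(node\d+)['\"]?\s*(?:#.*)?$", re.MULTILINE)


def node_runtime(action_yml: str) -> Optional[str]:
    """Return the declared Node runtime (e.g. 'node20'), or None for non-Node actions."""
    match = USING_RE.search(action_yml)
    return match.group(1) if match else None


def find_node20_actions(root: Path) -> List[Path]:
    """List action.yml/action.yaml files under root that still declare node20."""
    hits = []
    for name in ("action.yml", "action.yaml"):
        for path in root.rglob(name):
            if node_runtime(path.read_text(encoding="utf-8")) == "node20":
                hits.append(path)
    return sorted(hits)
```

Running `find_node20_actions(Path(".github/actions"))` covers local composite and JavaScript actions in a repo. Composite and Docker actions return None because they declare no Node runtime.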

If you need a deeper migration plan for application codebases moving off Node 20, use our field‑tested guides to sequence work without downtime: start with Node.js 20 EOL: A Zero‑Downtime Upgrade Plan and the fast‑track checklist in Node.js 20 EOL: Your 30‑Day Upgrade Plan. Even if your CI runner changes go smoothly, you don’t want a surprise runtime cutoff to stall your release week.

A focused checklist for this week

Here’s a tight, 60‑minute checklist you can hand to your platform team. No meetings required.

- Find and fix old runner binaries: search your golden images, AMIs, containers, and setup scripts for actions-runner-* packages below v2.329.0; rebuild and roll them out today.

- Run a brownout rehearsal: pick a non‑prod org and block runner registration for older versions during a lunch window. Confirm your auto‑scaling recovers cleanly with updated images.

- Pin toolchains and caches: lock SDKs and package managers; stabilize cache keys; cut cold starts by pre‑installing heavy toolchains in your images.

- Reprice two job classes: take January usage reports and recompute with the post‑reduction hosted rates; propose an experiment to shift those jobs for five business days.

- Set up usage alerts: soft budgets for Actions minutes by org and runner type; notify in Slack on 80% threshold so finance hears from you before the invoice arrives.
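The 80% alert rule from the checklist above is simple enough to express directly. This sketch assumes you already pull minutes used per org and runner type from your usage reports. The key format and budgets are hypothetical, and delivering the message to Slack (for example via an incoming webhook) is left out.

```python
from typing import Dict, List


def budget_alerts(used: Dict[str, float], budgets: Dict[str, float],
                  threshold: float = 0.8) -> List[str]:
    """Return one message per budget key whose usage has reached `threshold` of its budget."""
    alerts = []
    for key, budget in sorted(budgets.items()):
        minutes = used.get(key, 0.0)
        if budget > 0 and minutes / budget >= threshold:
            alerts.append(f"{key}: {minutes:.0f}/{budget:.0f} min ({minutes / budget:.0%})")
    return alerts
```

For example, an "org-a/linux" budget of 1,000 minutes with 850 used yields "org-a/linux: 850/1000 min (85%)".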

Cost scenarios: when hosted wins, when self‑hosted wins

Hosted wins when you’re compute‑bound with weak caches, you need macOS or Windows jobs in small bursts, or your existing fleet queues at peak. Price cuts plus zero management overhead is hard to beat.

Self‑hosted wins when you’ve engineered for locality—artifact stores next to runners, dependency mirrors on the same subnet, and container layer caches shared across a pool. It also wins when you need GPUs or exotic architectures. But with self‑hosted, your operational hygiene is part of the deal: automate runner upgrades, monitor registration failures, and keep goldens fresh.

Where teams stumble (and how to avoid it)

Unpinned dependencies burn minutes. A single “latest” tag cascades into cache misses across thousands of runs. Fix it once by pinning, and your cost graph drops without touching pricing.

Ephemeral runners that rebuild their environment from scratch on every job waste money. If your job spends 90 seconds bootstrapping the environment and 30 seconds doing real work, you’re paying for setup, not value. Push common setup into the image, and collapse your “time to first test” to under 15 seconds.

Over‑sharding is a trap. Sharding test suites to 32 runners looks speedy but can raise total minutes if startup overhead dominates. Benchmark three shard counts and pick the elbow of the curve.
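To find the elbow, model each shard as a fixed startup cost plus an even slice of the work. This sketch assumes the work splits evenly and that each job is billed rounded up to a whole minute (check your plan's billing rules). The 60-minute suite and 1.5-minute startup are made-up inputs.

```python
import math


def wall_clock_minutes(total_work: float, startup: float, shards: int) -> float:
    """Time until the slowest shard finishes, assuming an even split of the work."""
    return startup + total_work / shards


def total_billed_minutes(total_work: float, startup: float, shards: int) -> int:
    """Billed minutes across all shards, assuming each job rounds up to a whole minute."""
    return shards * math.ceil(wall_clock_minutes(total_work, startup, shards))


if __name__ == "__main__":
    # Hypothetical suite: 60 minutes of tests, 1.5 minutes of startup per shard.
    for shards in (4, 8, 16, 32):
        print(shards, round(wall_clock_minutes(60, 1.5, shards), 2),
              total_billed_minutes(60, 1.5, shards))
```

With these inputs, 4 shards bill 68 minutes for a 16.5-minute wall clock, 8 shards bill 72 minutes for 9 minutes, and 32 shards bill 128 minutes for about 3.4 minutes. Past 8 shards, each doubling buys less wall-clock time for many more billed minutes.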

How we help clients make this boring

If you’d rather not babysit YAML and image pipelines, we’ve productized this work. Our team can audit your workflows, right‑size runners, and build goldens that pass security muster and stay current automatically. See how we’ve tuned pipelines for high‑change SaaS and mobile teams in our client portfolio, and get a sense of our typical engagement in platform engineering services. If your finance lead wants a fixed‑fee plan, our pricing page outlines common bundles that cover both the cost model and the runner upgrade work.

Zooming out: what to expect next

I don’t expect GitHub to abandon the idea of pricing part of the control plane forever. More likely, we’ll see a refined model with clearer thresholds or credits that soften the impact for small teams while aligning heavy users with actual platform costs. If that happens, you’ll be in a far better place if you’ve already cleaned up cache behavior, right‑sized jobs, and automated runner upkeep.

Meanwhile, keep one eye on runtime deprecations (Node 20 today, Node 22 tomorrow) and one on policy dates like March 16. The teams that win aren’t reacting to announcements; they’re shipping from a stable base and making incremental improvements week after week.

What to do next

- Before Monday, March 16: rebuild and redeploy any image or template that includes an Actions runner older than v2.329.0; validate registration in a staging org.

- By next Friday: run a five‑day A/B on two job classes to compare hosted vs self‑hosted under the new hosted rates; decide whether to shift those jobs.

- This month: pin toolchains, stabilize cache keys, and set usage alerts. You’ll capture quick wins regardless of future pricing tweaks.

- If you need a deeper plan or want it done for you: start here—GitHub Actions Pricing 2026: What to Change Now and the self‑hosted angle in GitHub Actions Self‑Hosted Pricing: Your 2026 Plan. Then talk to us about a two‑week tune‑up.

Keep the conversation going with your developers and your finance lead. CI/CD is one of the few places where a day’s work pays off both in dollars and in happier engineers. Make the runner update now, measure like a hawk, and let the pricing debate happen on your terms.
