GitHub Actions Pricing 2026: Postponed, Plan Now

Let’s clear the air on GitHub Actions pricing 2026. GitHub’s plan to add a $0.002/minute platform fee for self‑hosted runners has been postponed, not canceled. Meanwhile, the January 1, 2026 price cuts for hosted runners went live, and on March 16, 2026, GitHub will enforce a minimum self‑hosted runner version. If you own build pipelines, this combination means two things: you’ve got short‑term savings to capture and an operational deadline you can’t miss.

What actually changed in March 2026?

Here’s the thing: the self‑hosted fee sparked backlash and GitHub hit pause. But the hosted runner price reduction—up to around forty percent depending on machine size—took effect on January 1, 2026. Public repos are still free on standard runners, and enterprise server customers remain out of scope. The postponed self‑hosted fee means your immediate invoice shock probably didn’t happen on March 1. However, GitHub also announced stricter hygiene on runner software.

The important operational date is March 16, 2026. From that day forward, self‑hosted runners older than a specified minimum version will fail to register or be blocked. If you manage fleet runners in Kubernetes, on autoscaling VMs, or on bare‑metal, you must roll out updated runner agents before that date. Treat it like a dependency pin with a deadline. Don’t let a Monday morning deploy grind to a halt because a node group missed the upgrade window.

Budget wise, GitHub says the price model changes leave most customers even or better off—96% saw no increase in their scenarios—and they’ve emphasized that public repositories remain free on standard sizes. Read that as: small teams and open source projects are protected; heavy private workloads still need forecasting and controls.

Will GitHub still charge for self‑hosted later?

Probably. The announcement framed the delay as time to re‑evaluate the approach, not a full reversal. The core argument is that GitHub’s control plane (queuing, logs, secrets, checks, security) costs real money even if you bring your own compute. That logic hasn’t changed. If you run high‑volume private workflows on self‑hosted runners, assume a fee could land with limited notice.

So plan with a “what‑if” band. Model $0.001–$0.003 per minute across your private self‑hosted usage. At $0.002/minute, a 15,000‑minute monthly workload adds $30. That sounds small until you multiply across orgs and environments, then layer in artifact and cache storage. Remember, artifacts and caches already bill separately; the postponed charge was just for the orchestration platform. Keep an envelope in your budget for a re‑launch in 2026.

Hosted prices dropped—should we switch back from self‑hosted?

Sometimes. If your self‑hosted rationale was purely cost avoidance, the hosted price cuts might tip the math. But if you rely on GPUs, giant memory footprints, exotic toolchains, or strict network locality, you’ll still need self‑hosted. The smarter move is to right‑size your mix: let hosted runners handle generic CI paths (lint, unit tests, docs, light integration), while self‑hosted focuses on jobs that truly need custom hardware or data gravity. That split alone can knock 20–40% off total CI wall‑time and smooth bursty queues.

Use your numbers: a quick pipeline audit

Don’t guess. Pull your usage and billing reports and inventory the top 20 workflows by time and by artifact volume. Then ask three questions for each:

- Can this job run on a smaller, cheaper runner class without blowing SLAs?

- Do we need to upload artifacts at all, or can we trim size/TTL and rely on test reports only?

- Is the concurrency setting wasting runner minutes because long queues hide idle time?

In many teams I’ve coached, two patterns dominate waste: oversized runners for IO‑bound tasks and artifact bloat. Docs builds on 8‑core machines, end‑to‑end logs zipped to hundreds of megabytes, caches that never expire—these add up. Start by halving runner size on jobs with median CPU under 40%, cut artifact TTLs to seven days unless compliance says otherwise, and pin cache keys thoughtfully so they actually reuse.

GitHub Actions pricing 2026: how to model it sensibly

Here’s a simple, durable model you can keep updated regardless of what GitHub decides:

- Workload minutes: Sum minutes per job by repo, visibility (public/private), and runner type (hosted/self‑hosted). Maintain a 90‑day rolling median to avoid reacting to spikes.

- Unit prices: Track current hosted runner per‑minute by size. Track artifact and cache storage unit prices. For self‑hosted, maintain a “what‑if” column at $0.001, $0.002, and $0.003/minute.

- Blended rate: Compute (Minutes × Price) + Storage. Add a 10% buffer for retries and flaky jobs.

- Scenario switch: For each top workflow, simulate moving from self‑hosted → hosted (and vice versa). Include queue time effects—hosted runners can scale horizontally; your fleet might not.

With that sheet, the postponed fee is just toggling a column. If or when GitHub reinstates a charge, you won’t scramble—you’ll pick the scenario you already vetted.

March 16 runner enforcement: the checklist

Make this boring and reliable. It’s an upgrade, not a fire drill.

- Inventory: List every runner group and label across orgs. Include ephemeral autoscaled pools and long‑lived pets.

- Version floor: Set your IaC (Terraform/Helm) to provision the minimum required agent version or later, and enable auto‑update on boot.

- Blue/green: Deploy new runner images alongside the old ones, test a canary workflow, then phase out the old AMIs or container tags.

- Hard fail: Add a pre‑job step that asserts the runner version and exits non‑zero if it’s below the floor. Fail fast beats a mystery queue.

- Observability: Emit runner version and labels into your job logs; scrape it so you can prove fleet compliance.

Where do CI minutes really go? A practical rubric

After optimizing hundreds of pipelines, these levers deliver the fastest wins without drama:

- Right‑size runners: Start with 2 vCPU Linux for lint/docs/typescript builds and 4 vCPU for typical test suites. Only step up if sustained CPU sits above 70% for most of the run.

- Artifacts: Default to JUnit/XML reports and slim HTML summaries. Upload full logs only on failure. Cap TTL at 7–14 days.

- Caching: Key caches to lockfiles or tool versions. Bust caches aggressively on major dependency bumps to avoid mysterious perf regressions.

- Matrix restraint: Do you need Node 18, 20, and 22 for every commit? Run the full matrix nightly; keep PR checks lean.

- Concurrency caps: Stop stampeding the same environment. Use concurrency groups to throttle deploys and integration tests.

- Ephemeral self‑hosted: Auto‑scale runners per job burst, destroy on completion. Pets drift; cattle stay patched.

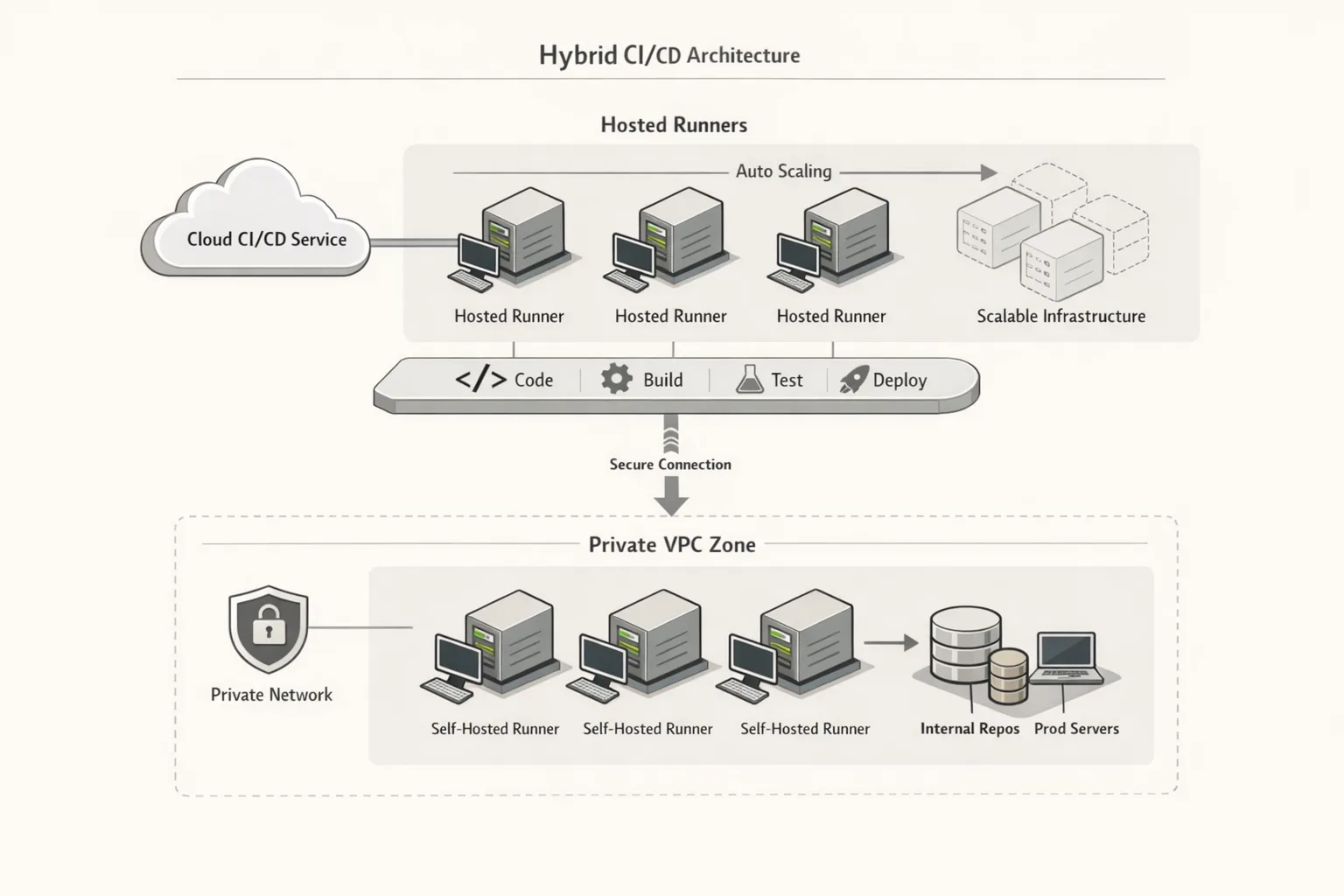

Hosted vs self‑hosted: when each wins

Use hosted runners when:

- Your jobs are CPU‑light and parallelizable (lint, unit tests, snapshots).

- You care about elasticity during PR storms more than max single‑job speed.

- You want less infra—no patch cycles, no image sprawl, no AMI pipelines.

Stick with self‑hosted when:

- You need GPUs, huge RAM, or specific CPUs the hosted catalog doesn’t offer.

- Jobs must run inside your VPC with tight egress controls.

- Large monorepos benefit from warm caches and pinned toolchains on sticky nodes.

The sweet spot is hybrid. Route generic checks to hosted for burst capacity; reserve self‑hosted for heavyweight builds and deployments behind firewalls. Label discipline and a small router action can automate this split with almost no developer friction.

People also ask

Do public repositories still run Actions for free?

Yes—standard hosted runners on public repos remain free. Larger sizes always bill. If your open source project uses jumbo runners for speed, keep an eye on usage minutes and machine classes.

What happens if I ignore the runner version enforcement on March 16, 2026?

Old runner agents will fail to register or be blocked from running jobs. Practically, that means queued workflows won’t start on those nodes. Avoid the outage: bake the minimum version into images now and roll forward gradually.

Are there alternatives to GitHub Actions for heavy self‑hosted workloads?

Sure—Jenkins, GitLab CI, and others. But you’ll still pay somewhere: compute, storage, maintenance, and the coordination glue Actions currently gives you. The real comparison is TCO and developer time, not just per‑minute pricing.

A 30‑60‑90 day playbook you can copy

Days 1–30: Stabilize and measure

- Upgrade all self‑hosted runners to the enforced minimum or later; enable auto‑update.

- Export 90 days of usage: minutes, artifact GB, cache GB, by repo and workflow.

- Downsize obvious overprovisioned runners; halve artifact TTLs; move full logs to failures only.

- Split generic checks to hosted runners; reserve self‑hosted for specialized jobs.

Days 31–60: Optimize the big rocks

- Refactor the top five workflows by cost/time: trim matrices, parallelize slow integration tests, adopt test splitting.

- Add concurrency groups to serialize deploys and shrink failed release blast radius.

- Introduce ephemeral autoscaled self‑hosted pools with version‑pinned images.

Days 61–90: Future‑proof

- Implement budget guardrails: per‑repo minute budgets with soft alerts at 80% and hard gates at 120%.

- Maintain the “what‑if” pricing column for self‑hosted at $0.001–$0.003/minute.

- Codify cost‑aware defaults in a shared workflow library (artifact TTL, cache keys, retry policy).

Common pitfalls I still see (and how to avoid them)

Artifact archives as a dumping ground. Don’t ship gigabytes of logs no one reads. Emit structured test results, attach failing logs only, and add retention policies. Developers should be able to find what they need within minutes, not dig through monolithic zips.

Matrix madness. Running every runtime and OS on every commit wastes minutes and developer attention. Keep PR checks fast; run the full matrix nightly and on release branches.

Blind retries. Automatic retries mask flaky tests and double your bill. Quarantine flakes and fail fast with actionable diagnostics.

Runner image sprawl. Ten different base images multiply patch work and surprise breakages. Standardize on two or three per language family and keep them patched weekly.

Zooming out: this isn’t just about price

Even if self‑hosted fees never return, the discipline you build now pays for itself: fewer flaky runs, smaller artifacts, and predictable cycle times. Your developers feel it as faster feedback; finance sees smoother forecasts. And if pricing changes reappear, you’re ready with toggles rather than a platform migration memo.

Related guides from our team

If you need a deeper dive on tactics and migration planning, we’ve published step‑by‑step playbooks you can use today. Start with our take on GitHub Actions Pricing 2026: What to Change Now, and if your org relies on metal or custom images, read GitHub Actions Self‑Hosted Runner Pricing: What Now. Planning a runtime refresh at the same time? Our Node.js 20 EOL zero‑downtime upgrade plan shows how to run upgrades without burning weekends.

What to do next (short list)

- Update all self‑hosted runners to the enforced minimum and bake auto‑update into images.

- Export 90 days of minutes, artifact, and cache usage; build the “what‑if” sheet.

- Right‑size runners, trim artifacts, and cap concurrency with labels you actually use.

- Hybridize: route generic checks to hosted, reserve self‑hosted for heavy or private jobs.

- Set budget alerts and merge guards to stop runaway pipelines before they burn time and money.

Want help turning this into a one‑week sprint? Our team does this routinely. See how we work on CI/CD modernization and platform engineering, browse a few representative engagements in our portfolio, or get a scoped estimate on pricing and packages. Or just drop us a line via contacts if you prefer a quick consult.

Comments

Be the first to comment.